
Context: The rapid integration of Artificial Intelligence (AI) into military systems has triggered a strategic competition among major powers, often compared to the early nuclear arms race.

AI Arms Race Between Countries

- United States: Rapid militarisation of AI through defence programmes

- Launched Project Maven to apply AI to military intelligence analysis.

- The Pentagon has allocated more than $13 billion for autonomous systems.

- Companies such as Palantir and Anduril are developing AI-enabled military systems, including drones.

- China: State-driven civil–military fusion strategy integrating private tech firms with defence research

- E.g., China showcases AI-controlled military systems and drone brigades at the Zhuhai Airshow.

- Russia: Development of autonomous loitering munitions and AI-guided drones tested in real combat (Lancet drones).

- The Russia–Ukraine war has become a major testing ground for AI-enabled drones and autonomous weapons.

- Europe: Rearmament with AI-enabled air defence and anti-drone systems (Germany, France, Britain, Poland joint air-defence initiatives).

- Other Emerging Powers: Countries such as India, Israel, Iran, Turkey and Pakistan are investing in AI-enabled military platforms (autonomous drones, decision-support targeting systems, cyber-AI warfare).

- Private Sector Role: Unlike nuclear weapons, the AI arms race heavily involves private technology firms and startups (Palantir, Anthropic, Anduril, defence tech startups).

Challenges in the AI Arms Race

- Lack of Global Regulation: There is no binding treaty governing autonomous weapons systems (unlike the Nuclear Non-Proliferation Treaty or the Chemical Weapons Convention in their domains).

- Autonomous Escalation Risks: AI-driven systems operate at machine speed, potentially triggering unintended military escalation (algorithm-triggered retaliation scenarios).

- Ethical Concerns: Delegating life-and-death decisions to machines raises humanitarian and legal questions.

- Proliferation Risks: AI technology is widely available, enabling smaller states and non-state actors to develop autonomous weapons (e.g., cheap drones used in the Ukraine war).

- Dual-Use Technology: AI is a general-purpose technology (similar to electricity or computing), making regulation difficult because civilian tools can be adapted for military use.

- Security Dilemma: Countries accelerate development due to fear of technological disadvantage, creating a self-reinforcing arms race.

- Private Sector Dependence: Military reliance on private technology companies creates issues of data security, corporate ethics, and strategic control.

Way Forward for Managing the Global AI Arms Race

- Global Governance Framework: Develop binding international rules for autonomous weapons (similar to the Nuclear Non-Proliferation Treaty or the Chemical Weapons Convention).

- Human Control Principle: Ensure meaningful human control over critical military decisions.

- E.g., similar to the 2024 U.S.–China agreement to maintain human control over nuclear weapon decisions.

- Transparency and Confidence-Building Measures: Promote information sharing, military AI norms, and verification mechanisms (arms control dialogues, AI risk-reduction agreements).

- Ethical and Legal Standards: Integrate International Humanitarian Law principles (distinction, proportionality, accountability) into AI-enabled weapon systems.

- Technology Safeguards: Develop fail-safe mechanisms and algorithmic oversight systems to prevent unintended escalation or malfunction.

- Regulation of Private Sector Participation: Establish clear frameworks governing collaboration between defence agencies and technology companies (data protection, export controls).

- Global Export Controls: Strengthen controls on the transfer of sensitive AI military technologies (similar to the Wassenaar Arrangement for dual-use technologies).

- Multilateral Cooperation: Encourage cooperation through the UN, G20, OECD and other international platforms to shape norms for military AI.

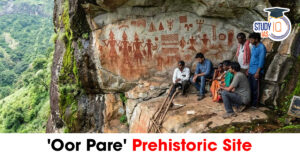

Oor Pare Prehistoric Site: Location, Roc...

Oor Pare Prehistoric Site: Location, Roc...

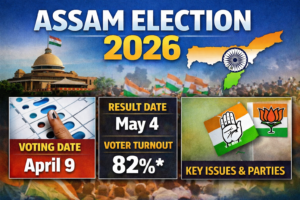

Assam Election 2026: Exit Poll, Result D...

Assam Election 2026: Exit Poll, Result D...

Great Indian Bustard (GIB): Features, Th...

Great Indian Bustard (GIB): Features, Th...